There have been many acts of hate in this country over the last year. More than ever, minorities and citizens without privilege have been personally attacked because of their cultural or religious background. So I want to take this moment to talk about what it means to be American and about “American” culture. I define American culture as a continuing patriarchal society with deep foundations that constantly need to be challenged. We were founded on worthy principles, but those principles are being twisted and pitted against what we say we stand for. The paper is different from the talk.

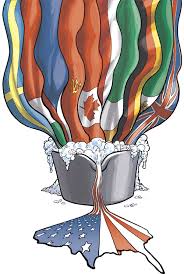

America is a country of immigrants. The true Americans had their land stripped from them and were forced to live on reservations. Since this country was started by immigrants, I believe we should be accepting and inclusive of those who come here, whatever their reasons. The original “Americans” were Europeans who wanted freedom from the monarchy, and we may be in a similar period now. These resistances are splitting the country in half, but there is no new land to flee to and steal in order to become a new “free” country, so the citizens of this “America” are going to have to figure out their problems on this soil. What is American culture? What is it that we bring that is unique to the world? It is not wrong to explore these various walks of life and to learn how these societies grew under their own brand of leadership; it should be encouraged. These phobias against those who are different should be questioned because at least those people are unique, whereas when I think of American culture I think sterile and clean. We should welcome diversity to help color our lives and become a greater unified force that could change the world through tolerance and acceptance.

213 Comments

bvring the article, and more importantly, your personal experience mindfully using our emotions as data about our inner state and knowing when it’s better to de-escalate by taking a time out are great tools. Appreciate you reading and sharing your story since I can certainly relate and I think others can to

Reply

Rita Jackson

2/22/2023 03:54:05 am

Have you ever thought your partner was cheating on you – even when they weren’t – you’re not alone. It can be a very stressful situation to find yourself in. And while it may seem like trust issues are what’s leading you to constantly worrying your partner is cheating, experts and research say it could point to something deeper than that. It’s a slippery slope, but the good thing is you can overcome it. I found myself in those shoes and could not get myself enough peace until I sought out a professional-hands on, I contacted (( RECOVERYBUREAU at CONSULTANT . COM )) to help access my spouse device so I can get proof. Well it turned out it wasn’t just thoughts, I was being cheated on. I got complete access to my spouse device from mine, messages calls chats everything.

You can contact them to clear your mind from worry

Reply

Anthony Morphy

11/6/2023 04:32:35 pm

⚫️ ALL YOU NEED TO KNOW ABOUT RECOVERY OF LOST FUNDS, CRYPTO SCAM RECOVERY AND INVESTMENT RECOVERY ⚫️

Reply

Veronica

12/17/2023 07:36:05 pm

Reply

Mato Ray

3/31/2022 07:38:20 am

I'm just too happy that everything is in place for me now. I would gladly recommend the use of spell to any one going through marriage problems and want to put an end to it by emailing Dr Emu through emutemple@gmail.com and that was where I got the help to restore my marriage. Whatsapp +2347012841542

Reply

Rita Jackson

2/22/2023 03:59:38 am

HIRE A PROFESSIONAL HACKER FOR REMOTE PHONE SPYING AND CRYPTOCURRENCY RECOVERY

Reply

Wilson Fox

6/9/2022 12:31:40 pm

I suffered from what they called peripheral artery disease (PAD). I have been suffering for years, Me and my wife searched for a medical cure, and then we came across a testimony of a man who suffered the same and was cured by Dr Chief Lucky. So my wife and I contacted Dr Chief Lucky via an email and thank God he replied. I explained what was wrong and he sent me herbal medicines that helped heal me completely. I am happy to say that herbal medicine is the ultimate and Dr Chief Lucky I am grateful. You can contact him on his email: chiefdrlucky@gmail.com or whatsapp: +2348132777335, Facebook page: http://facebook.com/chiefdrlucky or website: https://chiefdrlucky.com/. Dr Chief Lucky said that he also specializes in the following diseases: LUPUS, ALS, CANCER, HPV, HERPES, DIABETES, COPD, HEPATITIS B, HIV AIDS, And more.

Reply

Jerry Anderson

9/30/2022 04:49:09 am

Hello my name is Jerry Anderson I’m 45 years old, I was tested positive of simplex herpes in 2002. I was having difficulties in every outbreaks, I have tried different kinds of drugs and treatment. Six months ago I was desperately online searching for helpful remedies for simplex herpes cure, I came across some helpful remedies on how dr. Irene has helped so many people in curing genital herpes with the help of his herbal medication. After contacting him here on his website for the past 2 months however, I’ve followed his prescription on how to take the herbal medicine and it stopped all outbreaks completely even when I took foods that could trigger it before, To my greatest surprise I was cured completely after going for a medical check up. I'll tell everyone reading this that you shouldn't be discouraged by the medical doctor’s words about no cure for such a virus, I could understand very well that they are being selfish for the reason that they are enjoying receiving income for every check up . With this miraculous healing I strongly testify that there is a cure for herpes with the help of herbs and roots. And also I noticed that he is honest with every word he says about his herbal treatment. Email address: dr.ireneherbalhome1@gmail.com visit Website http://www.drireneherbalhomeblog.wordpress.com

Reply

lewis

11/15/2022 07:06:39 am

Crypto Recover/ How do I recover my lost BTC

Reply

Rita Jackson

2/22/2023 05:34:44 am

HIRE A PROFESSIONAL HACKER FOR REMOTE PHONE SPYING AND CRYPTOCURRENCY RECOVERY

Reply

Nela Jayden

11/25/2022 12:54:48 pm

I had issues with my husband and he moved to another city and he cut off communication with me. I find every means possible to reach out to him so that we can resolve our differences but to no avail. His mom and siblings didn’t even know his where about. I was depressed and messed up emotionally and I had to seek help to get him back to me before another lady takes over my place in his life. So I had to contact DR ISIKOLO who I explained everything to and was willing to help me and he did. He did a love reconciliation bond for me and my man and unknown to me that the result will manifest after 48 hours as he promised. My man returned back and came for me and I was amazed that I can say now that the love and happiness has been restored and we are more happier together and all I see now is forever with him and I am so thankful to DR ISIKOLO for his kindness to me and other people out there. His email contact: isikolosolutionhome@gmail.com You can also WhatsApp him on: +2348133261196

Reply

Wayn Adams

12/1/2022 03:34:25 pm

Reply

Rita Jackson

2/22/2023 05:35:11 am

HIRE A PROFESSIONAL HACKER FOR REMOTE PHONE SPYING AND CRYPTOCURRENCY RECOVERY

Reply

12/7/2022 10:21:13 am

My humble regards to everyone reading comments on this platform and a great thanks to the admin who made this medium a lively one whereby everyone can share an opinion, I am Mrs. Beate Hester, from Brooklyn, NY. I'm a nurse by profession, I had a serious health issue that took me 8 months to get back to work, during this period of eight months I came across an advert with a lot of good comments under the trading broker platform. I was very interested so I decided to contact the trading platform and invest with them, after my first deposit I was told there is an increase in buying stocks so I had to send another money and this whole thing continued for a good 6 months. before I could realize I was already bankrupt when I discovered that I have been scammed for a total sum of $357,890, I tried to get some recommended hackers online but I ended up getting scammed of an additional $35k, it hurts me so much, I already gave it up but then I came in contact with Email:WIZARDWIERZBICKIPROGRAMMER@GMAIL.COM who I also message directly via WhatsApp(+1) (845)207? 8532 and he responded to me urgently and helped me to get back all my funds, everything was sent to me at once. Wizard Wierzbicki Programmer proves to me that scammed crypto can be recovered. All thanks to Genius Recovery Advocate Telegram and WhatsApp:+49 1575 8718600

Reply

WAYN ADAMS

12/8/2022 12:31:25 pm

If you need concrete cheating evidence on your unfaithful partner the best hand that got you cover is Pro Gilbert wizard service he is a professional in hacking. When I noticed some strange changes about my ex-wife after I contacted an STD from her I learned she has been cheating on me so I search for an app that can be used to clone her phone conversations and passwords but all was in avail, then I come in contact with ‘’prowizardgilbertrecovery(@)engineer.com’’ through a blog comment after connecting with him, he asked for the info and clone her Facebook, WhatsApp, and all her phone conversations within 24 hours, he did a very professional job without any traces, so if you are in need of a legit hacker for DELETING OF BLEMISHES FROM CREDIT REPORT, CREDIT SCORE INCREASE, PHONE CLONING, PHONE TAP, SPY HACK YOUR CHEATING SPOUSE TO FIND OUT WHAT THEY BEEN UP TO, EMAIL HACKS, WHATSAPP, MESSENGER AND OTHER SOCIAL MEDIA APP, DELETING OF CRIMINAL RECORD AND EVICTION HISTORY contact him via his ''prowizardgilbertrecovery(@)engineer.com // What //sApp (+1)/ 541- (240) 9985

Reply

Rita Jackson

2/22/2023 05:35:42 am

HIRE A PROFESSIONAL HACKER FOR REMOTE PHONE SPYING AND CRYPTOCURRENCY RECOVERY

Reply

WAYN ADAMS

12/8/2022 06:47:44 pm

If you need concrete cheating evidence on your unfaithful partner the best hand that got you cover is Pro Gilbert wizard service he is a professional in hacking. When I noticed some strange changes about my ex-wife after I contacted an STD from her I learned she has been cheating on me so I search for an app that can be used to clone her phone conversations and passwords but all was in avail, then I come in contact with ‘’prowizardgilbertrecovery(@)engineer.com’’ through a blog comment after connecting with him, he asked for the info and clone her Facebook, WhatsApp, and all her phone conversations within 24 hours, he did a very professional job without any traces, so if you are in need of a legit hacker for DELETING OF BLEMISHES FROM CREDIT REPORT, CREDIT SCORE INCREASE, PHONE CLONING, PHONE TAP, SPY HACK YOUR CHEATING SPOUSE TO FIND OUT WHAT THEY BEEN UP TO, EMAIL HACKS, WHATSAPP, MESSENGER AND OTHER SOCIAL MEDIA APP, DELETING OF CRIMINAL RECORD AND EVICTION HISTORY contact him via his ''prowizardgilbertrecovery(@)engineer.com // What //sApp (+1)/ 541- (240) 9985

Reply

Clement George

12/20/2022 02:53:06 am

Reply

Anelka James

2/22/2023 05:36:46 am

It’s really pathetic the rate at which people lose their hard earned money to cryptocurrency investment scam and due to loss of their private keys as well as passwords. Sometimes in December I lost all my crypto tokens to a fraudulent Chinese trading platform which took about $157,000 from me with the intent of getting me 10% profit monthly on my investment but turned out to be a trick. I became worried and searching for the most reliable fund retrieval firm who can assist me retrieve my funds without hassle or hidden fees. I’m glad after few days I met RecoveryBureau whom I hired via their official email at RecoveryBureau @ consultant . c0m. They asked for some basic info and within few hours I was able to completely recover my stolen funds with ease. This is the best service I ever got on the internet and this team were swift to getting the job done. Hit this firm up if you have lost your funds to crypto theft at Email: RECOVERYBUREAU @ CONSULTANT . C0M

Reply

Melinda Farr

12/24/2022 03:37:19 am

Reply

12/29/2022 05:13:11 pm

GET RICH WITH BLANK ATM CARD, Whatsapp: +18033921735

Reply

Taylor fey

1/14/2023 05:00:49 pm

The Best Cryptocurrency Recovery Hackers I've ever known are RecoveryMasters

Reply

Anelka James

2/22/2023 05:37:58 am

My husband and I lost $1.1m worth of Bitcoins and USDT to a fake cryptocurrency investment platform. A few months back, we saw an opportunity to invest in cryptocurrency to make huge profits from our investments. We contacted a broker online who was pretending to be an account manager for a forex trading firm, we invested a huge part of our retirement savings and business money into this platform not realizing it was all a scam to steal away our money. After weeks of trying to withdraw, this broker continued to request more money until we were broke and in debt, it felt as if we are losing our life. Fortunately for us, we saw an article about RECOVERY BUREAU we were not in a hurry to contact them but we did some research about their services and found out they could help us recover our money from these scammers, we contacted RECOVERY BUREAU and in a space of 72 hours, RecoveryBureau was able to recover everything, this company did a thorough investigation with the information we provided them and ensured that every penny was returned to us, it felt so unreal how they were able to recover everything we have lost. We are truly grateful for the help of Recovery Bureau and we are putting this out there to everyone who needs their services. RecoveryBureau@consultant(.)com RecoveryBureauc@gmail(.)com..

Reply

David Sarah

1/18/2023 02:21:50 am

I am David Sarah, Before I was scammed, I thought those who fell for them were fools, but when I lost $40k in a binary options scam, I didn't even know it had happened until weeks later. The site and services I used seemed so genuine, and everything felt legit, but when they stopped answering my emails and messages, that's when I knew something was up. I was going to accept defeat until a friend advised me to contact Pro Wizard Gilbert Recovery Service of which I did, they were incredibly helpful and worked hard to get all my money back. I'd highly recommend them to anyone who has been swindled of their currency.

Reply

Anelka James

2/22/2023 05:38:46 am

My husband and I lost $1.1m worth of Bitcoins and USDT to a fake cryptocurrency investment platform. A few months back, we saw an opportunity to invest in cryptocurrency to make huge profits from our investments. We contacted a broker online who was pretending to be an account manager for a forex trading firm, we invested a huge part of our retirement savings and business money into this platform not realizing it was all a scam to steal away our money. After weeks of trying to withdraw, this broker continued to request more money until we were broke and in debt, it felt as if we are losing our life. Fortunately for us, we saw an article about RECOVERY BUREAU we were not in a hurry to contact them but we did some research about their services and found out they could help us recover our money from these scammers, we contacted RECOVERY BUREAU and in a space of 72 hours, RecoveryBureau was able to recover everything, this company did a thorough investigation with the information we provided them and ensured that every penny was returned to us, it felt so unreal how they were able to recover everything we have lost. We are truly grateful for the help of Recovery Bureau and we are putting this out there to everyone who needs their services.

RecoveryBureau@consultant(.)com

RecoveryBureauc@gmail(.)com..

Reply

Farrah Tiana

1/19/2023 11:38:06 am

After falling victim to fraud twice trying to recover my stolen USDT, I gave up on the possibilities of ever being able to have it recovered until Expressline recovery was recommended on Quora and I was very grateful to have come across the article as they recovered 85% of my stolen USDT and so I decided to share this for anyone else that might be in need of their services...you can reach them on Expresslinerecovery AT gmail DOT com

Reply

Gordon Braun

1/21/2023 12:33:41 am

After falling victim to a cryptocurrency investment scam, my family and I were left with nothing after these swindlers stole $107,000 in USDT and Bitcoins from us. We were so lucky to come across a post about Cyberwall Fire, a cryptocurrency and funds recovery company with plenty of experience in cybersecurity. Cyberwall Fire was able to recover all of our funds, and with the information we provided, they were tracked down and reported to the appropriate authorities. I highly recommend Cyberwall Fire for your cryptocurrency recovery.

Reply

Linda Cromwell

1/21/2023 09:18:38 pm

Back in 2019 my credit score was horrible , i had so many collection accounts and would noy get approved for anything.

Reply

Kroos David

1/22/2023 07:33:00 am

The process by which to expunge a criminal record varies from state to state. However, there are certain elements that remain the same in all expungement proceedings. Although each state has its own procedures and requirements for expungement, all require the person petitioning for expungement to have completed their probation and paid all fines, taken all court appointed classes, remained in good standing and must have not been convicted of a second crime during the rehabilitation period, but to save yourself months and years of filing for the expungement process, which could be very time consuming, I vouch Luciferhacker750@yahoo.com, he can help you do this in just 7 working days, message him and it helps to let him know referred you contact him via whatsapp +1(315)388-2001

Reply

Esther Timothy

1/22/2023 05:23:24 pm

They are heartless scammers, they ruined my life, by making me develop interest to invest my hard earned money. I deposited 10.7850 euros in June 2020, which was later turned to 98,908.00 euros including my pay out bonus, there was an impressive improvement in few days, 2 months later I had a car accident and needed money to pay my insurance access, Suddenly I was sent from Pillar to post, i tried reaching out to them to collect the money i invested to pay off my debts, they cut the live chats and got harassed from 1 to the other, until they told me I will forever be poor, then i realized that i was being scammed. I just wanted my money back! I was advised by a friend to seek for help from a recovery management to assist me recover my invested funds, God so kind i was able to reach out to a recovery guru VIA jamesmckaywizard@gmail.com or what'sapp +1 (507) 414-7049, i was able to recover my funds with the help of Mr JAMESMCKAYWIZARD his an expert on crypto/forex and bitcoin recovery, I feel obligated to recommend him and his team, their recovery strategies, and for working relentlessly to help recover my funds. feel free to reach out to him via his email address: jamesmckaywizard@gmail.com or what'sapp +1 (507) 414-7049, and will guide you on how to recover your invested capital, i advise everyone to be careful with this heartless stealing people.

Reply

Maria Bauer

1/23/2023 12:44:48 am

Hello everyone, I’m Maria Bauer from Brisbane, Australia.

Reply

Carol krason

1/25/2023 09:39:50 am

Reply

Shanika Stewart

1/27/2023 01:37:46 am

Reply

1/31/2023 03:37:31 am

CONTACT INFO EMAIL: Wizardwebrecovery(@)programmer(.)net

Reply

2/2/2023 07:32:33 am

I'm Truly Grateful To RecoveryMasters They Were Able To Recover My BTC

Reply

Kurt

2/2/2023 05:04:24 pm

Before I say anything else, I had my records that had been a pain in my neck all cleared by this genius Albert Vadim ( Vadimwebhack@gmail.com ). Felonies, mostly drug related but that was years ago and even though I have been clean for years, these records kept me unemployed for years until I got frustrated and decided to surf the internet for help. I am more than grateful I found this amazing soul Albert. I have a great job, he also helped get my credit score up to 860. I honestly can't thank him enough, he literally saved me and my family. I consider him family at this point.

Reply

2/5/2023 04:54:00 pm

I'm Truly Grateful To RecoveryMasters They Were Able To Recover My BTC

Reply

Mavis Wanczyck

2/5/2023 08:38:54 pm

Money they say never sleeps as I became vastly wealthy overnight. Being a winner of a multi-million dollar lottery certainly will be a life-changing event for almost every single lottery winner. My name is Mavis Wanczyck from Chicopee, Massachusetts, the famous PowerBall lottery winner of $758 million (£591m). I know many people would wonder how I had won the lottery. Would you believe me if I told you that I did it with spell casting? I met this famous spell caster known as Doctor Odunga and he was the one who did it for me. As shocking as it was to me, my famous comment to the press was “ I’m going to go and hide in my bed.” Never did I believe that Doctor Odunga made me wealthy overnight. If you want to have your chance of winning and becoming very wealthy just like me, contact Doctor Odunga at odungaspelltemple@gmail.com OR WHATSAPP HIM at +2348167159012 and you will be lucky. My advise is when you win, do not fail to appreciate his good work too. Thanks for reading and hope to see you at the top

Reply

Maria Willie

2/7/2023 03:55:36 pm

DR. TRUST IS THE BEST SPELL CASTER ONLINE WHO RESTORED MY BROKEN RELATIONSHIP AND I HIGHLY RECOMMENDS DR. TRUST ANYONE IN NEED OF HELP! WHATSAPP +14242983869

Reply

Malcolm

2/7/2023 05:51:12 pm

I never imagined it was possible for my credit score to be raised from low 300s to over 780 within 14 working days. Special thanks to this professional Albert Vadim. I honestly doubted this was ever possible but I gave it a shot because I was dying in silence. This man helped me get back on my feet financially and otherwise. IF YOU NEED HELP, YOU CAN REACH HIM VIA EMAIL: VADIMWEBHACK@ GMAIL,COM

Reply

2/7/2023 10:23:52 pm

Best Hackers Contact RecoveryMasters

Reply

Maria Jakes

2/8/2023 11:18:49 pm

Looking for a real life hacker that never disappoints? well here is it they helped me monitor my Husband's phone when I was gathering evidence during the divorce. I got virtually every information he has been hiding over the months easily on my own phone: the spy app diverted all his whatsapp, face-book, bank transfers and multimedia text messages, sent and received through his mobile phone/laptop: I also got his phone calls and deleted messages. he could not believe his eyes when he saw the evidence because he had no idea he was hacked. this team do all types of mobile hacks, bitcoin recovery or money lost to online scammers/computer hacks, you get unrestricted and unnoticeable access to your partner/ spouse/ anybody’s social account, email, E.T.C Getting the job done is as simple as sending an email to jamesmckaywizard@gmail.com or what'sapp: +1 507 414 7049 and thank me later their services are cool and easily accessible.

Reply

2/9/2023 05:37:17 am

I lost about $585,000.00 USD to a fake cryptocurrency trading platform a few weeks back after I got lured into the trading platform with the intent of earning a 15% profit daily trading on the platform. It was a hell of a time for me as I could hardly pay my bills and got me ruined financially. I had to confide in a close friend of mine who then introduced me to this crypto recovery team with the best recovery Albert Gonzalez Wizard i contacted them and they were able to completely recover my stolen digital assets with ease. Their service was superb, and my problems were solved in swift action, It only took them 48 hours to investigate and track down those scammers and my funds were returned to me. I strongly recommend this team to anyone going through a similar situation with their investment or fund theft to look up this team for the best appropriate solution to avoid losing huge funds to these scammers. Send complaint to Email: info@albertgonzalezwizard.online or albertgonzalezwizard@gmail.com WhatsApp: +31685248506 Telegram: +31685248506

Reply

Wayn Scott

2/9/2023 06:40:18 pm

Best Hackers Contact RecoveryMasters

Reply

ANITA GETTER

2/10/2023 05:40:21 am

I URGENTLY NEED A LOVE SPELL CASTER TO BRING BACK MY EX-LOVER BACK FAST CONTACT DR EHIMARE VIA EMAIL: DOCTOREHIMARE5@GMAIL.COM OR WHATSAPP HIM +2349018966808

Reply

ANITA GETTER

2/10/2023 05:43:38 am

I URGENTLY NEED A LOVE SPELL CASTER TO BRING BACK MY EX-LOVER BACK FAST CONTACT DR EHIMARE VIA EMAIL: DOCTOREHIMARE5@GMAIL.COM OR WHATSAPP HIM +2349018966808

Reply

TASHIA MARIE

2/10/2023 08:22:21 pm

I WANT MY EX LOVER BACK WITH THE HELP OF A POWERFUL SPELL CASTER CONTACT DR EHIMARE VIA EMAIL: DOCTOREHIMARE5@GMAIL.COM OR WHATSAPP HIM +2349018966808

Reply

Jimmy Wheeler

2/11/2023 12:39:54 am

Hello everyone, I’m Jimmy Wheeler from Sydney, Australia. I’m 64 years old and I own a Carwash business. A few months back, I invested 390,000 AUD worth of Bitcoins & Ethereum into the cryptocurrency platform CryptoXStock and later found out this platform is a scam and has defrauded other people including myself of our money. I fell sick and was in the hospital depressed for weeks. I got in touch with the authorities and there was nothing they could do to help me get back my money until I saw an article online about Spyweb Cyber Service, a cyber security expert who has tremendous reviews of how they have helped victims of internet scam to recover their money, I didn’t hesitate to contact them and provided all the information they needed. To my surprise, Spyweb was able to recover my money and trace down those scammers in less than 72 hours. I’m truly grateful for the services of Spyweb Cyber and I’m recommending them to every victim of an internet scam who wishes to recover their money.

Reply

2/12/2023 12:55:52 pm

HOW TO RECOVER LOST CRYPTOCURRENCY /CONTACT RECOVERYMASTERS

Reply

Heather Mcginn

2/12/2023 05:42:59 pm

The very purpose of investing is to gain profit but it become painful when you lose your investment, I see people complaining of getting their investments lost when investing in binary option trading. just the same way i lost mine, i equally know how it feels like when you lose your funds while trying to earn some profits, i had invested a total of $108k usd to a binary trading company not knowing that they had some corrupt brokers who choose to make away with peoples investment. they seized every damn coin that i had invested and kept on requesting for more funds to be deposited before i can have my total earnings. their silly excuses for demanding more deposit didn't go well with me and a part of me was already letting me know that i was in the wrong direction, i stopped talking with them for a few days and started looking for a smart means to get my invested funds back. and i was finally able to get back all my funds through a Recovery Specialist, with his trading experience and recovery Expertise i was able to get back my lost funds, All thanks to their immense service. if you ever lost your funds while investing it only Best you Contact ROOTKITS RECOVERY SPECIALIST via email on R O O T KITS 4 @ gmail .com or Telegram him on user iD: ROOTKITS7

Reply

Josie Wilson

2/13/2023 01:37:28 am

Reply

blank atm card

2/13/2023 01:09:45 pm

We have specially programmed ATMs that can be used to withdraw money at ATMs, shops and points of sale. We sell these cards to all our customers and interested buyers all over the world, the cards have a withdrawal limit every week.

Reply

Nancy Graves

2/13/2023 09:21:20 pm

I’m Nancy Graves from Richmond, Kentucky, and a few months ago, I invested some money into a cryptocurrency platform, I was told by the account manager to make payment in Bitcoins and Ethereum worth $173,000 hoping I would make huge returns on my investment. After some time, I tried to withdraw but I was not given access to my account and was told to put in more money. I found out this platform is a scam and these fraudsters have defrauded me of my money. I’m truly grateful to Spyweb Cyber service, a company that helps victims of cryptocurrency scams to recover their money, I was fortunate to come across their review on an article and I contacted Spyweb, I provided all the information they needed and my money was recovered within 72 hours. I’m truly grateful for the work of Spyweb and how they recovered my money. I highly recommend them to everyone.

Reply

Trevor Maynard

2/14/2023 12:42:30 am

My credit score was moved from low 400s to over 860 by this genius Albert Vadim within 7 working days. All derogatory reports gone. At first I thought this was too good to be true but it was my only hope at the time so I took my chances and I can say its one of the best decisions I ever made. So on behalf of my wife and my kids, I am taking this time to publicly thank him for helping us get loan approvals which helped us get a beautiful house and also drop his contact for anyone who wishes for a change.

Reply

Atin Fernando

2/14/2023 03:40:36 am

I consulted Wize Safety Recovery when I was involved in a bitcoin trading scam. It was unknown to me that there were systematic ways to retrieve scammed funds. I came across a business website who promised a huge return on investment. I was so convinced. The website was good and after all the convincing, I ended up depositing 150,000 USD, unfortunately, I fell into the wrong hands. I was really devastated until I sent an email to an expert who came highly recommended - Wizesafetyrecovery @ gmail com. I gave him all the information about the events, and within a few working days, they restored 85% of my BTC back to my wallet. I was shocked and glad at the same time. With trustworthy people like Wize Safety Recovery, I can 100% guarantee your money will be recovered back.

Reply

2/14/2023 10:49:20 am

HACKANGELS ARE CURRENTLY HELPPING CRYPTO SCAM VICTIMS RECOVER THEIR FUNDS.Hello everyone My name is James William, and I was a victim of a scam. Before I learned about HACKANGEL, a business that offers recovery services for all your money stolen through cryptocurrency, I wasn't sure it was possible to get back money that had already been sent out through bitcoins, USDT, and other cryptocurrencies. Just like other victims, I invested my resources in an endeavor in the hopes of earning substantial profits until I was informed that I would have to keep doing so even in the absence of any gains. After contacting HACKANGEL, I was able to retrieve everything, and I'm sincerely appreciative that there are people like them to assist put an end to all these investment crooks.

Reply

Abelard Bruno

2/15/2023 01:57:21 am

I invested on a platform that has positive reviews everywhere to deceive more people. At first it was actually going well until I decided to make a big withdrawal, they had let me withdraw a small amount in the beginning and this even made me trust this company even more until i needed to make a big withdrawal, they denied this and instead lured me into making more deposits as “company maintenance fee, Tax fee and so” in order to approve my withdrawal. This went on for another 2 weeks and i had already lost a fortune and it didn’t look like they were going to release anything i got more worried and aggressive about recovering my funds back, so one day i decided to go on a cyber hunt for a credible firm, i did deep research and came across the ROOTKITS RECOVERY FIRM and their services. i reached out to their contact detail R O O T K I T S 4 @ G M A I L . C O M to file a complaint on charge back i was well responded to and had a deep conversation on how i lost my money to NABCRYPTO investment everything seems so unbelievable while they assured me of getting everything have lost, i was asked questions on my mode of payment and evidences that shows a transaction occurred between both parties and if any agreement was breached or stated, after all these, within the early hours of the morning I got my lost btc back in my wallet, these guys are amazing. you can get them also on Telegram at ROOTKITS7

Reply

Roselyn Mendez

2/15/2023 06:30:37 am

I met this guy at a coffee shop one morning while I was driving to work , him and the girlfriend was talking about an investment where the man lost over $700,000 worth of Bitcoin but the fun part was that he was able to recover it all again , when I heard him saying this , I waited outside for them to finish up and i quickly approached him and was Like “ I overheard you discussing with your friend on how you lost your investment and was able to recover all back “ I was just curious to know how he did that because I have just recently been scammed as well and ever since then I’ve been depressed till I met this young man , he now told me how he was able to come across a hacking agent who helped him retrieve back his lost investment , me hearing his story and how everything went down with the hacking agent I quickly requested for the Agents contact which he gave to me , I’d say that was a miracle because I didn’t expect that I was ever going to find someone who had been in same situation as I am not to talk of being able to recover the lost money back but all this happens before my very eyes , I contacted the Agent through Email : KNIGHTHOODBOT @ gmail .com , and from that very point everything turned around for the better for me , I lost $318,000 to the scammers in total but when the agent was done , not even a dime was left behind for them, all was retrieved back to me and I can’t really put in writing how excited I am . I am here with my story to help people out there who has lost hope in recovering their money lost to scammers . Give this team the chance and worry less .

Reply

2/15/2023 07:02:25 am

My $112,000 worth of cryptocurrency was stolen by con criminals impersonating a group of investors. A few months ago, I was tricked into the trading platform with the prospect of daily profits of 20%. When it was time to withdraw, they ordered that I make additional payments on their website. I completed all the fees, but I still couldn't withdraw money from my profits. At this stage, I understood that I and many other people had been misled. Because of this, I felt so depressed that I almost lost focus at work. As a result, I got to know Spyware Cyber Recovery agency, a clever and quick hacker. I emailed them and explained my situation to them. After maintaining contact with these fake investors for a few hours, Spyware Cyber recovered my stolen cryptocurrency. For assistance with recovering stolen digital assets, including cryptocurrency, send your complaint via, spyware(@)cybergal.com / contact(@)cybegal.com / WhatsApp Number: +19892640381

Reply

Sheila Hagan

2/15/2023 09:06:09 pm

Good day everyone, I’m Sheila Hagan from Leeds, UK.

Reply

Mosley Alan

2/17/2023 07:51:17 am

Truth be told , crypto currency investment is quite profitable only if you are investing with the right company and finding the right company to invest with is where the whole mistake is made because a lot of them out there tend to be legit at first until you’re involved with them , I have actually been mining with one mining pool for over 8 months and could see my profit earnings but for me to withdraw my earnings that’s where the issue is , I have over 24btc in the website that I could not access any longer .. I wanted to overlook it at first and never get myself in wolves in any type of online investment but my wife never let go even when I did , she somehow managed to come across a Recovery hacking agent “ Virtualhacknet @ gmail . Com “ on LinkedIn . I explained every details to them and provided them with every information and aid they needed and at the very end of the week , I and my wife were both filled with smile and relief , finally we recovered back our Bitcoin .. I’m very grateful to the Agent that was assigned to us , he was very kind and explained every little detail to us .. you should do well to contact them on telegram @ VIRTUALHACKNET for a swift response .

Reply

2/18/2023 07:21:11 am

Excellent article! Thank you for your excellent post, and I look forward to the next one. If you're seeking for discount codes and offers, go to couponplusdeals.com.

Reply

Hannah Matilda

2/19/2023 02:10:37 am

I’m recommending ROOTKITS RECOVERY FIRM for their wonderful and excellent recovery services given to me when I was being scammed of my investments by a Cryptocurrency investment platform.. I lost all my invested funds a total of £893k to this company, I had been a member to their platform for about 6 months time investing all i have had with the company. I finally got to know that this has been a fraudulent company when I wanted to withdraw a part of my profits into my bank account. I made several attempts to process the transaction but it was declined, on reaching out to the support desk for assistance to withdraw my funds they demanded for some company fees and charges so they could grant me the access to the withdrawal. i did paid for the fees as well but i still could not make the withdrawal swiftly, this really puts me in a detrimental state.. I was really shattered about the whole situation as i do not know how to go about it again, then i had the thought about getting a hacker to help me out as i felt screwed by this company. and that was how i discovered the recovery firm that help me to get all my funds back from the crypto company, i came along a lot of positive reviews about them here so i trust the process and contacted their email R O O T K I T S 4 @ gmail . Com for assistance and they were excellent, I’m really thankful for their support and service as they restored Happiness back into my life. i recommend this team for anyone who wants to recover what has been lost to scam companies here is their Telegram also - ROOTKITS7

Reply

desire Faes

2/19/2023 09:45:43 am

Hackers are a blessing to us.. how they do what they do it’s a thing of mystery to me but we’re really blessed to have them in our society, I mean how would I have been able to recover my $87k Bitcoin that I was ripped off by those scammers that preyed on my lack of experience in the crypto space!?.. I have seen plenty of people who was also able to get back their money through the services of professional hackers and if you’ve been scammed by one of those fake investment companies and you’re looking to take back what belongs to you then JETHACKS is the answer , thousands of individuals have been including me have been giving a second chance through their excellent service and you can contact them on

Reply

Atin Fernando

2/20/2023 11:26:23 am

This post is for persons seeking to recover all of their lost funds to online scams , for RECOVERY OF LOST BITCOIN,RECOVERY OF ANY LOST CRYPTOCURRENCY, if you are seeking--> RECOVERY OF LOST FUNDS TO RIPPERS IN FOREX TRADES AND BINARY OPTIONS CONTACT [Wizesafetyrecovery @ Gmail com] . I had my blockchain wallet spoofed by merciless rippers , they were able to get away with 4.0147BTC from my wallet , this made me very sad and depressed as i was desperately in need of help , i made my research online and came across a very credible recovery agent with the adr. [Wizesafetyrecovery @ Gmail com] . The hack agency helped me recover all I lost and also reveal the identity of the perpetrators , that's why I'm most appreciative and also sharing contact addr. for anyone in a similar situation, contact the recovery specialist with the address above .

Reply

Rita Jackson

2/22/2023 04:57:52 am

HIRE A PROFESSIONAL HACKER FOR REMOTE PHONE SPYING AND CRYPTOCURRENCY RECOVERY

Reply

Eric Schuchardt

2/21/2023 02:56:11 am

I believe this information will be very useful to someone out there because someone might still be in the same situation as me. few months back, I was scammed of my funds, I invested into an online trading company and I was scammed of my savings and profits. i did everything possible to resolve this with the company but it was as if I’m making another mistake because i had to pay for the fees which they requested and still couldn’t have the access to withdraw my investment. I found this page some days ago but didn’t take it seriously. because I saw different comments and I got confused and I don’t know who is real or not. But i later summon the courage to contact a Wizard here his name ROOTKITS i sent an email to him on ROOTKITS 4 @ G M AIL . COM for help and they came through. thanks again to this great team who by their level of experience recovered all my funds back just when I taught I’ve lost it all I’m grateful for your help.. to anyone who might have been in such situation I encourage you to reach out to ROOTKITS as well they are on telegram with the user; ROOTKITS7

Reply

2/22/2023 02:22:15 am

Asset recovery firm richard pryce wizard is a reputable expert in smart contract audit on stolen cryptocurrency and recovery. They offers experience, intelligence, and expertise in Digital asset recovery. I believed this expert, who helped me recover my money from a phoney investment scheme,. I had been working with the unregistered trader using mt5 in bitcoin investments, and throughout the course of this, I lost a lump sum. As it is quite difficult to locate someone to provide financial support, this left me feeling very dejected. I shared predicament with asset recovery team at richard pryce wizard, and within a few working days, i have just detached 18 btc from my trust wallet. I am overjoyed because I had been left without communication. Please stop worrying , because richard pryce wizard is here to assist everyone. They want to help you because I’ve used and seen how amazing their services are, thus they are willing to do so. contact and open a detailed case with asset recovery firm via Email: richardprycewizard2016@gmail.com or whatsapp +36302513218

Reply

Rita Jackson

2/22/2023 03:57:55 am

Have you ever thought your partner was cheating on you – even when they weren’t – you’re not alone. It can be a very stressful situation to find yourself in. And while it may seem like trust issues are what’s leading you to constantly worrying your partner is cheating, experts and research say it could point to something deeper than that. It’s a slippery slope, but the good thing is you can overcome it. I found myself in those shoes and could not get myself enough peace until I sought out a professional-hands on, I contacted (( RECOVERYBUREAU at CONSULTANT . COM )) to help access my spouse device so I can get proof. Well it turned out it wasn’t just thoughts, I was being cheated on. I got complete access to my spouse device from mine, messages calls chats everything.

You can contact them to clear your mind from worry

Reply

sina jim

2/22/2023 06:59:36 pm

i was scammed by a forex website Tradestation to be precise to the tune 0f $153,700. was firstly informed that ill be able to withdraw 11.5% roi weekly from the second month of membership and if i invest more, the roi could be increased by 4.5%. i later invested $17,300 to make it a total of $170,000. After the whole investment, i was only able to make a withdrawal for the first week, then after was told to invest more to withdraw more but told them that i wasnt interested and wanted to continue with the current roi plan but was told that i wouldnt be able to withdrawal till i invest that was when i saw the red flag and told them i wasnt interested anymore but not surprised by their act i couldn't make any withdrawal again. I told a friend and he referred me to kevinmitnickcyber@gmail.com and within a week of our contact I was able to withdraw all my funds in addition to my roi for the whole planned duration of 9 months advance payment. I can't still believe that this happened, all thanks to kevinmitnickcyber@gmail.com , you can get in touch with them if you need any of these services, they’re the best out there. kevinmitnickcyber@gmail.com. I'm so grateful.

Reply

Broome Harrison

2/23/2023 12:12:26 am

I have been a victim of crypto scam too and i know how it does really feel to loose your funds especially when you remember that hard work and sleepless night put together, Absolutely made enough money from Real Estate Investments before i got to meet a colleague over on LinkedIn who introduced me into Crypto investment company, where investors are to benefit from the company’s high return profits. i went on and invested some funds with them without doing my due diligence over the company’s profile. the devastating part of this story is that i got some loan to enable me invest in this platform in order to make some profit.. not being aware that i was dealing with some group of con artist, the investors made everything look so real in the way that you won't believe it all scam. it was going well till few weeks ago when i requested for a withdraw, i was instructed to invest more till i reached the withdrawal limit then i can make withdrawal.. i did invested more but when i got to the withdraw limit as they said i was still not able to make my withdrawal rather they kept persuading me to make more investment. i stopped talking to them and started looking for a way to recover my money. then fortunately i came across R O O T K I T S 4 @ G M A I L . C O M ( ROOTKITS RECOVERY FIRM) on the goggle where people has some good reviews about the team, they did put an amazing smile on my face by getting back my lost funds. how they was able to do this i don't have the idea but i must say they are some expert this field. i will keep on recommending your service to anyone i know. you can as well contact him through Telegram: ROOTKITS7

Reply

2/23/2023 12:21:31 pm

Trackforcrecovery..org expertise is a consulting fund recovery agency that specializes in the field of wealth and asset recovery, has narrowed its focus to forex scam and cryptocurrency scam Consult track force recovery firm they specializes in helping investors and companies who have lost sum of funds to unregulated binary option broker and other online scam

Reply

Zack Lome

2/23/2023 10:16:27 pm

Good day everyone my name is Zack lome This is my first time putting down comments like this on here. I think i need to put some lights into someone's life today. I have a top-notch hacker that has worked so rewarding for me. I am blessed today by his work so far. He's a Guru i will never lay off. He programmly erase all my unforgettable credit issues, my inaccurate missed payment dates was settled, my park rate and eviction notice too got cleared off. And this put me in a better place to rearrange my credit history appropriately with a wonderful trademark. You can be next, this is my biggest gift ever to everyone. Get the email and mail whatever problem you are facing right now directly to a personal hacker today. Email: Magnetonetwork.www@gmail.com I am so lucky to have scape through life difficulty with my credit report

Reply

Daniel Higgins

2/24/2023 10:33:45 am

It baffles me when these scammers out here think that they could just rip off anyone they come across and go scot-free , well I never gave up on my funds when I learnt that I have been scammed on an online investment . I was added to this telegram group in September 2022 and they only discussed about Bitcoin and stock market, on there you’d see a lot of people who claim to invest with the company and have earned lots of profits with them over the years , but all that was happening there was just to deceive us that were added to the group and I was so unlucky to fall for their trick , started investing with the company as well in October after I thought I had studied the group and how everything works , I ended up loosing over £617,000 to them , this was just my actual investment not to talk of the said earned profits that I don’t even know if any of that was true .. after learning that the company was a scam company that never even existed , I swore never to back down till I get my funds back , I then hired a private hacker who helped infiltrate their website and track down the transactions with the proofs I provided to them .. I am very glad we pulled this off and at the end of it all I recovered all my money and made sure their website was exposed so that others won’t fall victim to their tricks . Contact Email : KNIGHTHOODBOT @ GMAIL DOT COM .

Reply

Hank Tamas

2/26/2023 01:06:14 pm

I saw an opportunity to invest in cryptocurrency about two months ago and I took my chance. I contacted a broker who I saw videos on YouTube and I invested a huge sum of money into Bitcoins & Ethereum hoping to gain a huge profit, while I was waiting and after some weeks, I saw on their website that I have doubled my money. I tried to make a withdrawal as I needed money to foot my bills but the broker insist I continue to invest or pay some money to withdraw my funds, I realize at that point I was being catfished. A month after, I saw a post about SPYWEB CYBER, a funds recovery company and I contacted them immediately, to my surprise SPYWEB was able to recover my bitcoins and Ethereum after I provided the necessary information for them. They were able to retrieve all my money and gave me the scammer's location which I sent to the authorities and these people were apprehended. I’m super grateful for SPYWEB CYBER and wish to recommend them to everyone out there.

Reply

‘’I need back my money’’.. ‘’ I need my family back’’ that was the only thoughts I had for months and thanks to Albert Gonzalez Wizard , I got back my family and my money .. I was depressed for months as my husband and 5year old left me to go live with his mum cos I used all our savings to invest in crypto investment company, took a loan and sold my car too in a bid to pay the withdrawal fees I was desperate very desperate and I nearly lost everything but thanks to Albert Gonzalez Wizard , he recovered my £861k from those heartless scammers .. it’s a long story but at the end I was happy , I am forever grateful to him.. This has made me to cross paths with him through a review on here and am writing this review here in hope that it will help someone out there , if you’ve been in a similar situation please reach out to Albert Gonzalez Wizard, he is a very competent and reliable hacker . The contact details are as follow , email: info@albertgonzalezwizard.online or albertgonzalezwizard@gmail.com whatsapp +31685248506 AND Telegram: +31685248506

Reply

Ethan Lisandro

2/27/2023 02:53:45 am

The first time i had taught of investment was after I met a lady online who makes me feel comfortable and always talk me into self-employed business, I decide to always seek for her advice and she gave me a broker site where I can invest and have profit within a short period of time, I do really trust her but after I invested into the crypto site I discover she was behind everything and I have lost all my savings to the crypto company she introduced me into, how she gets to lure me into this with her sweet words i couldn’t tell, she ruined my life and I couldn't be myself anymore. So i decide to seek for help, omg... any attempt i made i get more scammed couldn't figure out who was real and who wasn't and at the end of it all i came up with a decision that nothing on the internet is real again. after about a month i gave up on recovery of my money i sent to broker site by bitcoin payment which is up to $115,992 in total. one day at work i was kind of less busy and i was on Twitter following up some twit, i came across ROOTKITS RECOVERY CENTRE, i read a lot of good twit about them with the following contact address R O O T K I T S 4 at G M AIL . C O M or via TELEGRAM Handle ; ROOTKITS7 i waited till i got home after work and we talked for long and i decide to put him to test, to end the whole story, i got everything that i loss on my new blockchain wallet, i was scared at first until he explained to me, a big thanks to the only real recovery hacker online and that's ROOTKITS who i have experienced his professional work actually.

Reply

Charlene Stones

2/28/2023 02:39:57 am

Thank Goodness I finally got my funds back . I had about $300k+ locked out in a blockchain trading wallet and ever since then I couldn’t access it , I have laid multiple complaints to the blockchain support regarding the issue but each time they kept on telling me that the issue will soon be resolved and I have been waiting on them for over 3 months but still yet nothing has been done about it , I tried trading the Bitcoin on there and move my money directly to my bank account but all my trial was to no avail and of course that was frustrating because I don’t know how I’d be the one to deposit my funds to the trading wallet but can’t take it out when I choose to , well after many trials and error I had to hire a Recovery agent whom I read about on google , on how he recovered most people’s funds successfully … just within one week he was able to move the whole funds to my other wallet where I could access it easily , how he did that still surprised me but I guess it’s his line of work and he knows better , that’s why you should give the Agent a try and see the wonders he does yourself . Contact Email : VIRTUALHACKNET @ GMAIL dot COM

Reply

Jonathan Hughes

2/28/2023 03:30:26 am

After going through all the comments, I found some painful,while some were funny. My conclusion is that most people will still get scammed just because they cannot read in- between the lines. I had also been a victim in the past. Now i have a resident hacker who helped me recover my funds(100% guaranteed). He also helps me vet any bitcoin investment i want to make.He gives me the go ahead or tells me to not make the investment,and so far, it had been worth it..The truth is that only an hacker can know a bitcoin scam. You just have to make one your friend. In this digital world, like we have family Doctors, Lawyers that we put on retainers, i always advice people to have a trustworthy hacker on their side.. You can contact them via; Darkrecoveryhacks@gmail. Com. Thanks for your guideline’s which enabled me to have my funds back

Reply

Kyrenia Androula

2/28/2023 06:10:04 am

I’m not the type that will get conned and when that happens I don’t go down without a fight, this time though i made a couple investment with an organization that appears to be fraudulent one, they almost did get away with all my investment as i was never able to make withdrawal or take any of my profit. this action made eager to know whats going wrong.. i wrote a message to the company clients support system but they failed to rectify the issue at that point i know already it’s some sort of scam going on but thanks to ROOTKITS RECOVERY FIRM i contacted them over on TELEGRAM; ROOTKITS7 for their intervention in getting me out of this mess of an investment. I’ve had several professionals help me in situation but this was a new challenge, I gave them what was necessary and they worked quite quickly, I’m just glad I got all my investment fully refunded, you can also reach out to them on email through the following add - r o o t K i t s 4 @ g m a i . c o m for help if ever been in a similar situation as mine.

Reply

barbara leon

2/28/2023 10:10:01 am

I recently lost access to my bitcoin wallet through some facebook hoax and thought my coins were lost forever. But then I found a bitcoin recovery company called Techspace and decided to give them a try. I couldn't be happier with the service I received. The team at Techspace was extremely knowledgeable and professional. They kept me updated throughout the recovery process and were able to successfully recover my lost coins. What impressed me most about Techspace was their commitment to customer satisfaction. They truly went above and beyond to make sure I was happy with the outcome. Their pricing was also very reasonable compared to other bitcoin recovery companies I had initially tried. If you're in need of a bitcoin recovery service, I highly recommend Techspace. They're a trustworthy and reliable company that gets the job done right, you can reach out to them on email techspace (at) cyberservices (dot) com or WhatsApp +1 (346) 895-2434.

Reply

Harold Muhney

2/28/2023 11:01:07 am

The Reality of life always sets in at some point in life and no matter how much we try to deny it, we can’t . I know myself to be a sore loser who doesn’t know when to give up and that nearly killed me when in my dealings with the fraud company I was investing with .. deep down I knew it was a scam , I could see all the signs but then I had already Invested over $800k including the fees .. how was I going to turn back at this point ??.. I couldn’t do that and all I could think of was getting back my money , I was thinking it’s one last fee which turned into another one and another one until the day I came across a post on LinkedIn about how JETHACKS has helped individuals recover their money from fake investments companies so I quickly reached out to him on Telegram: Jethackss … I shared all the necessary details which enabled the JETHACKS RECOVERY to launch the levels 100+ primes initiative exercise and my funds was retrieved to my wallet within 48 hours THANKS to JETHACKS . You can further reach out to JETHACKS on Email: jethacks7 @ GMAIL . COM

Reply

ROSE MYSTICAL

3/1/2023 06:10:37 am

LOST YOUR CRYPTO? YOU WANT TO RECOVERY YOUR STOLEN BTC? CONTACT HACKANGELS.

Reply

Rodolfo Marino

3/3/2023 01:51:02 am

I think i need y'all to know about ROOTKITS a Global recovery firm. i got scammed by a forex website, almost lost a very huge amount of money to the tune of $415,000. was firstly informed that i will be able to withdraw 15.5% ROi weekly from the second month membership and if i invest more, the ROI would be increased by 5.5%. i did every thing they asked me to do but they only allowed me to make a withdrawal only at first week we started our partnership, then after was told to invest more to withdraw more.. i as well did invested a lot just as i was directed but after that that they decline me to withdraw my investment together with the profits, when i got to noticed this Red flag and told them that i can’t provide the money they are reuesting and i wasn’t interested anymore, i told a friend what i was going through luckily enough he introduced me to The ROOTKITS RECOVERY FIRM and just within 3 days of our contact over on his Telegram @ ROOTKITS7 i was able to recover all my funds in addition to my roi for the whole planned duration of 4 months advance payment. i can’t still believe how all this happened, All Thanks to this Team, you can as well get in touch with him if you in need of their assistance to get back your lost funds He’s one of the best out there. Email contact: ROOTKITS 4 @ G MAIL . C O M I’m really grateful for all they did for me and my family.

Reply

3/4/2023 03:29:24 am

If you feel Bitcoin to the wrong address, you can check the blockchain to see if the transaction has been confirmed. If it has not, there may be a chance to cancel the transaction and recover your funds. I have seen and peruse about cryptocurrency scams remind me of some heart ache experience when I lost 1.6 million: 1,600,000. in numbers to fake online crypto investment scam when invested huge amount of money. I searched online came across recovery expert who I contacted via (cyber@qualityservice.com

Reply

Antonio Derek

3/18/2023 09:52:06 am